The long goodbye to passwords

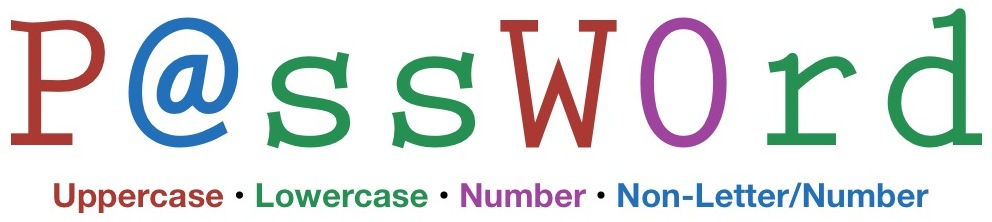

First of all, if what's written above is your password, you need to change it now. I'll wait. Okay, good, now for the rest of the article.

Why Passwords Don't Work

It's not much of a secret that passwords are not a very good way to secure information. The real problem is that when companies try to make users choose more secure passwords, they end up making the whole system less secure. Does that seem counterintuitive? Here's a scenario.

A company wants to make its corporate systems more secure. It decides that the passwords its employees are using are not secure enough, so it institutes rules for passwords, which include:

- Must be 8 characters or longer
- Must include a lowercase letter
- Must include an uppercase letter
- Must include a number
- Must include a non-letter/number character
- Must not be the same as the previous password used
- Must not be the same as the username, or contain the username

You've probably run across these rules before. You may not have seen all of them, but you've probably seen most of them, and probably many of them on a single system. In theory, these are all good rules. Where they lead to a less…
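For concreteness, the rules listed above could be checked with something like the following. This is only a minimal sketch: the function name `password_rule_violations` and its parameters are hypothetical, not taken from any real system, and the case-insensitive username comparison is an assumption.

```python
def password_rule_violations(password, username, previous_password=None):
    """Return the rules from the list above that `password` breaks.

    Hypothetical helper for illustration; real systems vary in the details.
    """
    violations = []
    if len(password) < 8:
        violations.append("must be 8 characters or longer")
    if not any(c.islower() for c in password):
        violations.append("must include a lowercase letter")
    if not any(c.isupper() for c in password):
        violations.append("must include an uppercase letter")
    if not any(c.isdigit() for c in password):
        violations.append("must include a number")
    if not any(not c.isalnum() for c in password):
        violations.append("must include a non-letter/number character")
    if previous_password is not None and password == previous_password:
        violations.append("must not be the same as the previous password")
    # Assumption: the username check ignores case. This single test covers
    # both "same as the username" and "contains the username".
    if username and username.lower() in password.lower():
        violations.append("must not be the same as, or contain, the username")
    return violations


if __name__ == "__main__":
    for candidate in ("password", "Passw0rd!", "Alice2024!"):
        print(candidate, password_rule_violations(candidate, "alice"))
```

Note that an easily guessed password such as `Passw0rd!` passes every check in this sketch.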

Stephen Wolfram never disappoints…

http://youtu.be/_P9HqHVPeik

No one will come away from this video amazed at how humble Stephen Wolfram is, but that's not the point of the video. It's an introduction to a forthcoming programming language from Stephen Wolfram, named, appropriately enough, the Wolfram Language. It attempts to build on his work over the past 30 years: creating Mathematica, writing his book A New Kind of Science (humbly referred to on his site as Wolfram Science), and building Wolfram|Alpha. It takes the knowledge and algorithms built into Wolfram|Alpha and makes them available in a symbolic programming language. The demo is fairly entertaining (considering its topic), and it should be very interesting to see what is done with this language once it's available to the general public. For more information, see the Wolfram Language section of the Wolfram Research website.